☕ Welcome to The Coder Cafe! In a previous post, I briefly touched on systems thinking after reading Learning Systems Thinking. My honest take: it was an interesting introduction, but I wasn’t fully convinced. The concepts felt abstract, the examples too sparse. Then I read Thinking in Systems by Donella Meadows. It might be one of the best books I’ve read in my career (and it’s not even a computer science book). This post is my own introduction to the core concepts, grounded in a real example from my experience. Get cozy, grab a coffee, and let’s begin!

Introduction

Have you ever fixed an incident, only to see it come back two weeks later? Or made a change that improved one metric while quietly degrading another? Or spent months firefighting without ever feeling like things were actually getting better?

These aren’t signs of bad engineering. They’re signs of reacting to events without understanding the structures that produce them.

Understanding those structures requires a different kind of thinking, and that’s exactly what systems thinking is: the ability to shift from reacting to individual events to understanding, and then improving, the structures that produce them.

This post is an introduction to systems thinking, covering the core concepts through a real example from my experience at Google.

What Is a System?

First, let’s define what a system is. In essence, a system is:

A set of elements

Interconnected

To achieve something

Distributed systems are an obvious example. A 3-node, single-leader database, for instance, is composed of:

3 nodes (elements)

Connections from the leader to the replicas (interconnections)

With the goal of storing data reliably over time

Interestingly, the interconnections are why distributed systems can surprise even their own designers: add enough nodes, replication lag, and competing writes, and the system starts behaving in ways no single component would predict.

Stocks and Flows

To reason about how systems change over time, we need two important concepts:

A stock is an accumulation of material or information that has built up in a system over time. For example: the number of machines available in a cluster, the size of a message queue, the amount of technical debt in a codebase.

A flow is what changes a stock: material or information entering or leaving it. For example: machines being added to or removed from service, messages being enqueued and consumed, or requests being received and processed.

The key thing to keep in mind: stocks take time to change because flows take time to flow. You can’t instantly restore machine availability or drain a queue with a single action. This has real consequences for how systems behave under pressure. We will come back to it.
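To make the point about stocks and flows concrete, here is a minimal sketch that treats a message queue as a stock with one inflow (enqueues) and one outflow (consumes). The function names and all the numbers are assumptions chosen for illustration, not measurements from a real system.

```python
from typing import List, Optional


def simulate_queue(backlog: int, inflow_per_s: int, outflow_per_s: int, seconds: int) -> List[int]:
    """Return the queue size (the stock) at the end of each second."""
    history = []
    for _ in range(seconds):
        # The stock only changes through its flows: enqueues in, consumes out.
        backlog = max(0, backlog + inflow_per_s - outflow_per_s)
        history.append(backlog)
    return history


def seconds_to_drain(backlog: int, inflow_per_s: int, outflow_per_s: int) -> Optional[int]:
    """Seconds until the backlog reaches zero, or None if it never drains."""
    net_outflow = outflow_per_s - inflow_per_s
    if net_outflow <= 0:
        return None  # the inflow keeps up with (or beats) the outflow
    return -(-backlog // net_outflow)  # ceiling division


if __name__ == "__main__":
    print(seconds_to_drain(10_000, 900, 1_000))  # current consumers
    print(seconds_to_drain(10_000, 900, 2_000))  # consumers doubled
```

With a backlog of 10,000 messages, 900 messages/s coming in and 1,000 messages/s going out, the queue needs 100 seconds to drain. Even doubling the consumers does not make the backlog disappear instantly: it still takes 10 seconds, because the stock can only shrink as fast as the net flow allows.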

Feedback Loops

One of the most important concepts in systems thinking is the feedback loop.

A feedback loop is what the system does automatically because its own result feeds back into it. Put differently: if A causes B, then B in turn influences A.

Let’s take a concrete example. Suppose you live in a house with a central thermostat set at 20°C. It turns the heating on when the temperature drops to 19°C, and off when it reaches 21°C.

The feedback loop works like this:

A: Temperature change

B: Thermostat turns heating on or off

The thermostat turning on or off (B) is caused by the temperature change (A). But the temperature change (A) is in turn influenced by the thermostat (B). Each effect feeds back into its own cause. This is a feedback loop.
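The thermostat loop can be sketched as a small simulation. The 19°C and 21°C switching points come from the example above; the heater strength, the heat-loss rate, and the outside temperature are assumptions picked only to make the behavior visible.

```python
from typing import List, Tuple


def simulate_thermostat(
    start_temp: float,
    outside_temp: float,
    steps: int,
    heater_gain: float = 2.0,   # assumed: degrees added per step while heating
    leak_rate: float = 0.1,     # assumed: fraction of the indoor/outdoor gap lost per step
    on_below: float = 19.0,
    off_above: float = 21.0,
) -> List[Tuple[float, bool]]:
    """Return (temperature, heating_on) after each step."""
    temp = start_temp
    heating = False
    history = []
    for _ in range(steps):
        # B reacts to A: the thermostat switches based on the temperature.
        if temp <= on_below:
            heating = True
        elif temp >= off_above:
            heating = False
        # A reacts to B: the temperature changes based on the heating state.
        temp += (heater_gain if heating else 0.0) - leak_rate * (temp - outside_temp)
        history.append((round(temp, 2), heating))
    return history


if __name__ == "__main__":
    for temp, heating in simulate_thermostat(15.0, 5.0, 20):
        print(f"{temp:5.2f}°C heating={'on' if heating else 'off'}")
```

Starting from a cold house, the heating stays on until the temperature crosses 21°C, then switches off until it falls back to 19°C, and so on. Neither variable can be explained without the other, which is what makes this a loop rather than a one-way cause.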

Balancing vs. Reinforcing Feedback Loops

There are two kinds of feedback loops.

A balancing feedback loop resists change: It pushes the system back toward a goal or limit. Think of it as a stabilizer: when something moves away from the target, the loop acts to bring it back. The thermostat is a perfect example. As the temperature drifts away from 20°C, the thermostat reacts, and the system returns to equilibrium.

A reinforcing feedback loop amplifies change: More leads to more, less leads to less. An action produces a result that drives more of the same action, generating growth or decline at an accelerating rate. The YouTube algorithm is a clear illustration: the more a video is viewed, the more the algorithm surfaces it; the more it’s surfaced, the more views it gets.
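A reinforcing loop is easy to see in numbers. The sketch below assumes, purely for illustration, that each round of recommendations brings new views proportional to the views a video already has; the 10% figure is made up and says nothing about how any real recommendation system works.

```python
from typing import List


def simulate_views(initial_views: float, rounds: int, boost: float = 0.10) -> List[float]:
    """Return the view count after each recommendation round."""
    views = initial_views
    history = [views]
    for _ in range(rounds):
        extra_views = boost * views  # more views -> the video is surfaced more
        views += extra_views         # more surfacing -> more views
        history.append(views)
    return history


if __name__ == "__main__":
    history = simulate_views(1_000, 10)
    gains = [after - before for before, after in zip(history, history[1:])]
    print([round(g) for g in gains])
```

The gain per round keeps growing because each round starts from a larger stock of views. A balancing loop shrinks the gap to its goal; a reinforcing loop widens the gap from wherever it started.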

More formally, we can have 4 cases of feedback loops:

Balancing ceiling: If A↑ causes B↓, then B↓ influences A↑

Balancing floor: If A↓ causes B↑, then B↑ influences A↓

Reinforcing growth: If A↑ causes B↑, then B↑ influences A↑

Reinforcing collapse: If A↓ causes B↓, then B↓ influences A↓

The more feedback loops a system contains, the more complex and surprising its behavior becomes, especially when those loops interact.

Delays

An often overlooked but critical property of feedback loops is the delay between an action and its effects.

Delays are pervasive in systems and strong determinants of behavior. When the gap between action and effect is long, two things happen:

Foresight becomes essential: Acting only when a problem becomes obvious means missing the window to address it early.

Oscillations become likely: We overreact because the system hasn’t had time to respond, then overreact again in the other direction.

Think of an autoscaler that takes 3 minutes to provision new instances. By the time the new capacity is ready, the traffic spike has already peaked. The window to act had opened before the problem was even visible on the dashboard. This is why foresight matters: when there is a significant delay between action and effect, reacting to what you see now means always acting too late.

And the consequences compound. The autoscaler, still responding to the old signal, overshoots. Then it sees too much capacity and scales down, right before the next spike arrives. One example, two problems: a system that needed foresight got a reaction, and then oscillated because of it.

The delay didn’t change the goal. It made the system work against itself.
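The overshoot-then-undershoot pattern can be reproduced with a deliberately naive controller. In this sketch, which is an assumption-laden toy and not a model of any real autoscaler, the controller orders the gap between demand and the capacity it currently sees, and each order only takes effect `delay` steps later. Crucially, it ignores the orders that are already in flight.

```python
from collections import deque
from typing import List


def simulate_autoscaler(demand: int, steps: int, delay: int, start_capacity: int = 10) -> List[int]:
    """Return the capacity after each step for a controller with a provisioning delay."""
    capacity = start_capacity
    # Capacity changes that were ordered but have not taken effect yet.
    in_flight = deque([0] * delay)
    history = []
    for _ in range(steps):
        # The controller reacts only to what it sees now, not to pending orders.
        in_flight.append(demand - capacity)
        # The oldest order finally lands (immediately when delay is 0).
        capacity = max(0, capacity + in_flight.popleft())
        history.append(capacity)
    return history


if __name__ == "__main__":
    print(simulate_autoscaler(demand=20, steps=12, delay=0))
    print(simulate_autoscaler(demand=20, steps=12, delay=3))
```

Without delay, capacity jumps to 20 and stays there. With a 3-step delay, the same rule keeps ordering more capacity while the first orders are still on their way, climbs to 50, then scales all the way down to 0. The goal never changed; only the delay did.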

System Boundaries

System boundaries are artificial. They help us frame a problem, but in reality, everything is interconnected. The boundaries we draw determine what we see and, therefore, what we miss.

Consider a microservices architecture in which each team owns a service. Every team has solid SLOs, careful on-call rotations, and clean dashboards. And yet end-to-end latency keeps creeping up, and users are complaining. Each team looks at its own service and sees green. The problem is that the boundary is wrong; no one is looking at the system as a whole.

This is one of the most common traps in engineering: optimizing within a boundary while the real issue lives outside it. Before changing a system, it is worth asking: Am I looking at the right boundary?
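Here is a small sketch of how every team can be green while the system is red. It assumes a purely sequential call chain and uses average latencies so that they can simply be added up; the service names, latencies, objectives, and the 400 ms end-to-end budget are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Service:
    name: str
    avg_latency_ms: float  # what the owning team measures
    objective_ms: float    # what the owning team is accountable for


def team_view(services: List[Service]) -> Dict[str, bool]:
    """Each team checks only its own service against its own objective."""
    return {s.name: s.avg_latency_ms <= s.objective_ms for s in services}


def end_to_end_ms(services: List[Service]) -> float:
    """What the user experiences when the services are called one after another."""
    return sum(s.avg_latency_ms for s in services)


# Hypothetical request path.
CHAIN = [
    Service("gateway", 40, 50),
    Service("auth", 45, 50),
    Service("catalog", 90, 100),
    Service("pricing", 95, 100),
    Service("checkout", 140, 150),
]
USER_BUDGET_MS = 400  # hypothetical end-to-end target

if __name__ == "__main__":
    print(team_view(CHAIN))
    print(end_to_end_ms(CHAIN), "ms against a budget of", USER_BUDGET_MS, "ms")
```

Every entry in `team_view` is `True`, yet the request takes 410 ms against a 400 ms budget. Nothing is wrong inside any single boundary; the problem only exists at the boundary nobody owns.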

The Iceberg Model

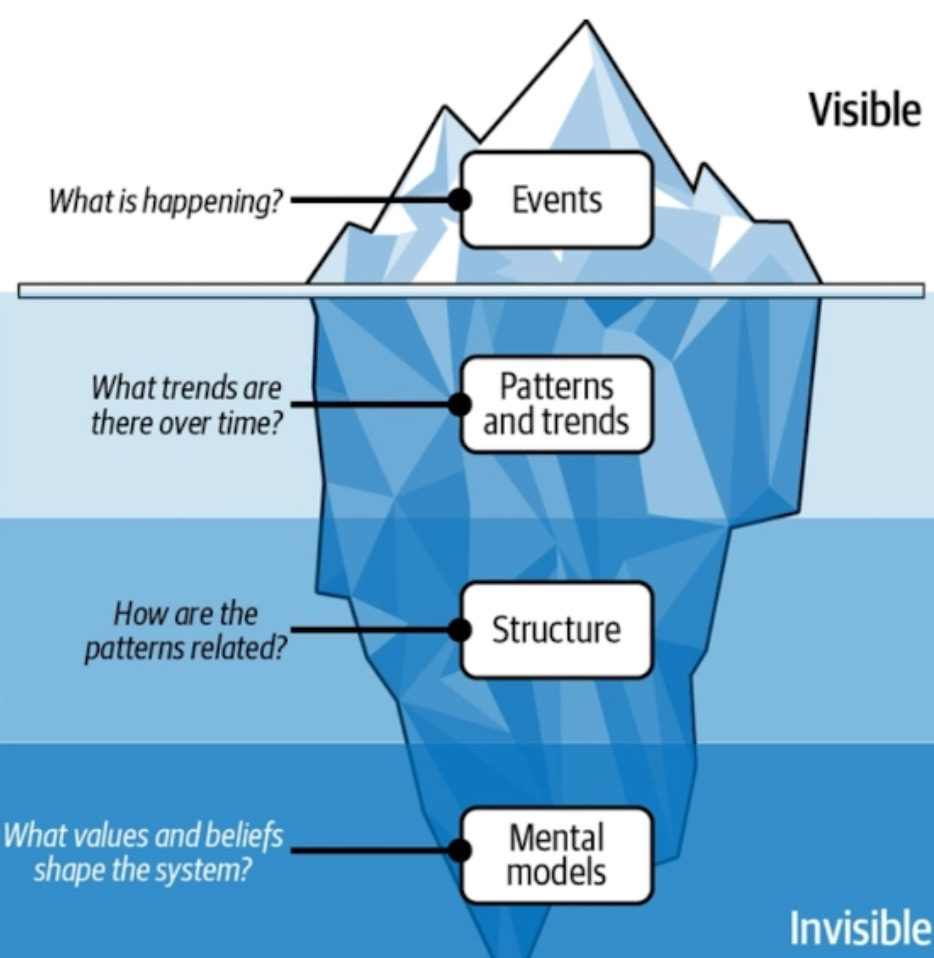

When something goes wrong in a system, what do we actually see? Usually just the surface: an incident, a spike, an outage. The iceberg model gives us a way to think beneath it.

The model has four levels:

Events are what’s visible: the incident alert, the latency spike on the dashboard. This is where most of our attention goes, and where reactive thinking lives.

Patterns and trends are what you find when you zoom out. Has this happened before? At what frequency? Under what circumstances? Patterns reveal that what felt like a one-off event is actually part of a larger rhythm.

Structure is the underlying system design: the feedback loops, the incentives, the processes that produce the patterns. You can’t fix a pattern without understanding the structure that generates it.

Mental models are the beliefs and assumptions that shaped the structure in the first place. They’re the hardest to see and the hardest to change.

Most incident response lives at the event level. Systems thinking asks us to go deeper. As an SRE, I find that this model resonates: we’re trained not just to react to incidents but to understand why they happen, from the patterns to the structures and, eventually, the assumptions behind them.

A Concrete Example: Safe Removal Service

Let me now bring all of these concepts together through a concrete example from my previous role at Google, where I worked on the systems powering Google’s ML infrastructure.

I was heavily focused on a system called the Safe Removal Service (SRS)[1]. This service had a simple API and one core responsibility: to say yes or no when another system requested permission to disrupt a given entity. Most disruptive services at Google, the ones that reboot machines, drain jobs, or take clusters offline, were designed to ask this service before acting.

In our context, the key constraint was preserving capacity, meaning ML accelerators (TPUs and GPUs). For example, within a given cluster, at least 90% of TPUs had to remain available at all times. So if 95% were currently available, SRS could approve disruptions only as long as they didn’t push availability below 90%.

NOTE: The threshold values and other details have been altered for confidentiality reasons.

The API was deliberately simple:

“Can I reboot this machine?” → Yes/No

“Can I drain this job?” → Yes/No

“Can I take down this cluster?” → Yes/No
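To give a feel for the kind of decision behind such a yes/no answer, here is a minimal sketch of a capacity gate. It is not the actual SRS API or implementation: the function name, its parameters, and the integer-percent threshold are all hypothetical, and the 90% value is the altered figure from the example above.

```python
def can_disrupt(total: int, available: int, requested: int, min_available_pct: int = 90) -> bool:
    """Approve a disruption only if enough units stay available afterwards.

    total: units in the cluster (e.g. TPUs)
    available: units currently available
    requested: units the caller wants to disrupt
    """
    if total <= 0 or not 0 <= available <= total or requested < 0:
        raise ValueError("invalid capacity figures")
    remaining = available - requested
    # Integer arithmetic avoids floating-point surprises right at the threshold.
    return remaining * 100 >= min_available_pct * total


if __name__ == "__main__":
    print(can_disrupt(total=1_000, available=950, requested=50))
    print(can_disrupt(total=1_000, available=950, requested=51))
```

With 950 of 1,000 units available, disrupting 50 lands exactly on the 90% floor and is approved, while disrupting 51 is refused.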

Identifying the Feedback Loops

SRS implemented several balancing feedback loops. For example, when available capacity dropped toward 90%, the service would start refusing disruptive requests, which stopped availability from falling further and gave it room to recover. This was the primary loop: a governor that kept the system in a safe zone.

There was also an implicit reinforcing loop on the positive side: by allowing maintenance to proceed when capacity was healthy, the service enabled machines to be upgraded, patched, and kept in good shape, which in turn kept capacity high.

So far, so good. But here’s where it gets interesting.

The Hidden Reinforcing Loops

The balancing loop protected current capacity. What it didn’t account for was what happened when capacity was already constrained.

When available capacity hovered near 90%, SRS would block most maintenance requests. Machines couldn’t be patched. Hardware with known error trends couldn’t be swapped. Security upgrades were deferred. Maintenance debt accumulated, silently, invisibly.

This created a first hidden reinforcing loop:

Less capacity → Deferred maintenance → More failures → Even less capacity

The balancing loop was actively feeding the very problem it was trying to prevent.
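The loop above can be sketched as a toy simulation. Everything in it is an assumption made for illustration: the weekly maintenance demand, the failure and repair rates, the idea that every 30 machines of deferred maintenance cause one extra failure per week, and the simplification that approved maintenance finishes within the week. It is not a model of the real service.

```python
from typing import List, Tuple


def simulate_fleet(
    total: int,
    available: int,
    weeks: int,
    due_per_week: int = 20,            # machines newly needing maintenance
    base_failures: int = 10,           # failures per week with no maintenance debt
    repairs_per_week: int = 10,        # broken machines returned to service
    debt_per_extra_failure: int = 30,  # deferred machines per extra weekly failure
    min_available_pct: int = 90,
) -> List[Tuple[int, int]]:
    """Return (available machines, maintenance debt) at the end of each week."""
    floor = (min_available_pct * total + 99) // 100  # capacity floor, rounded up
    broken = total - available
    debt = 0
    history = []
    for _ in range(weeks):
        debt += due_per_week
        # Balancing loop: maintenance is approved only within the headroom above the floor.
        approved = min(debt, max(0, available - floor))
        debt -= approved
        # Hidden reinforcing loop: deferred maintenance turns into extra failures.
        failures = min(available, base_failures + debt // debt_per_extra_failure)
        available -= failures
        broken += failures
        repaired = min(broken, repairs_per_week)
        available += repaired
        broken -= repaired
        history.append((available, debt))
    return history


if __name__ == "__main__":
    print(simulate_fleet(total=1_000, available=950, weeks=20)[-1])
    print(simulate_fleet(total=1_000, available=905, weeks=20)[-1])
```

Starting at 95% availability, the fleet stays at 950 machines with no debt. Starting at 90.5%, the gate approves almost nothing, the debt grows every week, failures creep up, and availability keeps sliding even though the gate never approves a disruption that breaches the floor.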

A second reinforcing loop emerged from human behavior:

Low capacity → More incidents → Bypass mechanisms invoked → Riskier actions taken → Capacity lower still

When the system was under stress, operators would sometimes override SRS to unblock critical work. Each bypass, reasonable in isolation, eroded the safety margins that the balancing loop was designed to protect.

The Goal Was Inaccurately Defined

There’s a principle from Thinking in Systems that describes this precisely:

System behavior is particularly sensitive to the goals of feedback loops. If the goals—the indicators of satisfaction of the rules—are defined inaccurately or incompletely, the system may obediently work to produce a result that is not really intended or wanted.

Specify indicators and goals that reflect the real welfare of the system. Be especially careful not to confuse effort with result or you will end up with a system that is producing effort, not result.

SRS was measuring a metric that looked right: current capacity. But current capacity was not the same as real health. A cluster at 92% availability that was accumulating maintenance debt and hardware errors was far more fragile than a cluster at 91% that was fully patched and stable. The balancing loop couldn’t tell the difference.

The deeper fix wasn’t just tuning the threshold. It was making the controller health-aware, not just capacity-aware. Rather than gating only on “% available right now,” the system needed to incorporate slow indicators: maintenance backlog growth rate, share of fleet on known-bad firmware versions, hardware error trendlines, override and bypass rates.
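As a rough illustration of the difference between capacity-aware and health-aware, the sketch below compares the two clusters from the example using the slow indicators listed above. The field names, the limits, and the sample values are all hypothetical; how such signals should actually influence the decision is a design question this sketch does not answer.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ClusterSnapshot:
    name: str
    available_pct: float
    backlog_growth_pct_per_week: float  # maintenance backlog growth rate
    bad_firmware_pct: float             # share of fleet on known-bad firmware
    hw_error_trend_pct_per_week: float  # slope of the hardware error rate
    bypasses_last_30_days: int          # overrides of the safety gate


# Hypothetical limits above which a slow indicator counts as a warning sign.
SLOW_INDICATOR_LIMITS = {
    "backlog_growth_pct_per_week": 1.0,
    "bad_firmware_pct": 5.0,
    "hw_error_trend_pct_per_week": 0.5,
    "bypasses_last_30_days": 2,
}


def capacity_ok(snapshot: ClusterSnapshot, floor_pct: float = 90.0) -> bool:
    """The capacity-only view: is enough of the cluster available right now?"""
    return snapshot.available_pct >= floor_pct


def fragility_signals(snapshot: ClusterSnapshot) -> List[str]:
    """The health-aware view: which slow indicators are past their limit?"""
    return [
        field
        for field, limit in SLOW_INDICATOR_LIMITS.items()
        if getattr(snapshot, field) > limit
    ]


if __name__ == "__main__":
    debt_laden = ClusterSnapshot("debt-laden", 92.0, 5.0, 12.0, 3.0, 4)
    patched = ClusterSnapshot("patched", 91.0, 0.0, 0.0, 0.0, 0)
    for cluster in (debt_laden, patched):
        print(cluster.name, capacity_ok(cluster), fragility_signals(cluster))
```

Both clusters pass the capacity check, and the 92% one even looks better. Only the slow indicators show that it is the fragile one.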

By the time the reinforcing loops made their effects visible, the stock (cluster health) had already been degrading for weeks. The delay between cause and effect made the problem invisible until it was expensive to fix. This example was not about a flawed design. It was about a structure that, taken as a whole, was quietly working against itself.

Summary

A system is a set of elements interconnected to achieve a goal.

Stocks are accumulations that change over time through flows; stocks take time to change.

A feedback loop occurs when an effect feeds back into its own cause.

Balancing feedback loops resist change and push toward equilibrium; reinforcing feedback loops amplify change.

Delays between action and effect can cause oscillations and make problems invisible until too late.

System boundaries are artificial; the boundary we draw determines what we see and miss.

The iceberg model: events are visible, but patterns, structure, and mental models lie beneath.

System goals must reflect real welfare, not just what’s measurable; inaccurate goals lead to unwanted behaviors.

A well-designed balancing loop can mask hidden reinforcing dynamics. The most dangerous moment is when a system appears to be working.


❤️ If you enjoyed this post, please hit the like button.

💬 Have you ever built or maintained a system that looked healthy on the dashboard while something was quietly accumulating underneath?

[1] I already mentioned that service in a previous post. You can find more information in this whitepaper: VM Live Migration At Scale.